First freely available multi-sensor data set for machine learning for the development of fully automated driving: OSDaR23

In future fully automated driving at Grade of Automation 4 (GoA4), sensors on the train will perceive objects and obstacles in the track environment. The sensor data is evaluated by AI models based on machine learning (ML). The reliability of an AI model depends on the amount of data used for training, and therefore also on the availability of suitable data sets. Together with the German Center for Rail Traffic Research (DZSF), DB Netz AG has now created and published the first such data set for the rail sector as part of the sector initiative Digitale Schiene Deutschland.

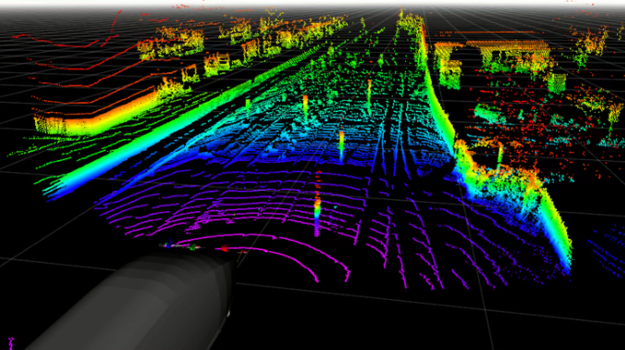

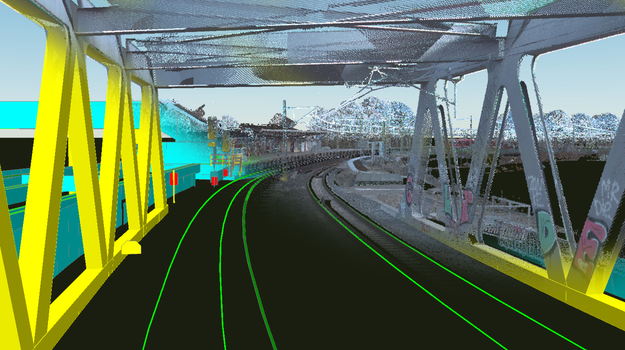

Fully automated driving will involve technical systems taking over tasks previously performed by the train driver, including route monitoring. For this purpose, sensor-based perception systems are being developed that perceive objects in the train environment. These systems consist of various sensors, which are usually mounted at the front of the train. They should provide reliable images of the surroundings under all visibility and weather conditions (day, night, rain, etc.). To achieve this, a multi-sensor configuration consisting of medium- and long-range cameras, infrared cameras, LiDARs (Light Detection And Ranging) and radars is usually used. AI software then interprets the environment captured in the sensor data.

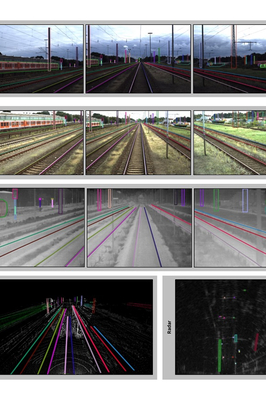

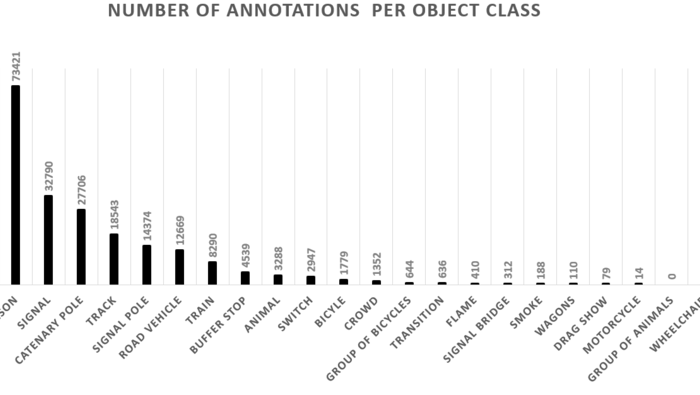

AI software that can interpret the data from such a multi-sensor configuration is based on machine learning (ML) and needs to be trained with a very large amount of annotated sensor data. Annotations are labels on image regions that mark the objects to be learned, for example rails, catenary poles, persons, etc. (see Figure 1). Until now, public data sets from the railroad sector have been very rare, which is why DB Netz AG, within the sector initiative Digitale Schiene Deutschland, and the German Center for Rail Traffic Research (DZSF) at the Federal Railway Authority (EBA) have created OSDaR23, the first publicly available multi-sensor data set. The sensor data annotated as part of the project was recorded by Digitale Schiene Deutschland during several data collection runs in Hamburg. The dataset contains regular railroad environments and train operating situations as well as some special situations and objects (such as flames and smoke) that were staged.

The sensors were mounted on top of a track work vehicle and calibrated to each other. Figure 1, top left, shows how the sensors were mounted on the vehicle. Data from the following 24 sensors were recorded in a time-synchronized manner at an acquisition frequency of 10 Hz (see Figure 2):

- 3 high-resolution cameras, 3 medium-resolution cameras, 3 infrared cameras

- 3 long-range LiDARs, 1 mid-range LiDAR, 2 short-range LiDARs

- 1 long-range radar

- 4 inertial measurement units, 4 GPS/GNSS sensors
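The sensor list above can be cross-checked with a few lines of Python. This is only an illustrative sketch: the dictionary keys and function names are made up for this example and are not identifiers from the published data set. It sums the listed sensors and derives the nominal time between two samples at the stated 10 Hz.

```python
# Sensor counts as listed above. The keys are illustrative names only,
# not identifiers used in the published data set.
SENSOR_COUNTS = {
    "high_resolution_camera": 3,
    "medium_resolution_camera": 3,
    "infrared_camera": 3,
    "long_range_lidar": 3,
    "mid_range_lidar": 1,
    "short_range_lidar": 2,
    "long_range_radar": 1,
    "inertial_measurement_unit": 4,
    "gps_gnss": 4,
}

ACQUISITION_HZ = 10  # acquisition frequency stated in the text


def total_sensors(counts: dict[str, int]) -> int:
    """Sum the number of sensors over all listed sensor types."""
    return sum(counts.values())


def frame_period_ms(frequency_hz: float) -> float:
    """Nominal time between two consecutive samples, in milliseconds."""
    return 1000.0 / frequency_hz


if __name__ == "__main__":
    print("sensors:", total_sensors(SENSOR_COUNTS))
    print("frame period [ms]:", frame_period_ms(ACQUISITION_HZ))
```

The total matches the 24 sensors named in the text, and 10 Hz corresponds to one synchronized sample roughly every 100 ms.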

Furthermore, an annotation specification was developed that describes the annotation types and geometries used and the object classes to be annotated. The annotations are stored in the ASAM OpenLABEL format.
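To give a rough idea of how annotations in an OpenLABEL-style JSON file can be processed, the following minimal sketch uses only the Python standard library. The miniature document is hand-written and heavily simplified: all UIDs, names, class labels and coordinate values are made up, and it is not a schema-valid excerpt of OSDaR23. It assumes the general OpenLABEL layout of a root `openlabel` element with `objects` and `frames`; the helper functions are hypothetical and not part of any published toolkit.

```python
import json
from collections import Counter

# Hand-written miniature example. All UIDs, names and values are made up,
# and required fields of the real schema are omitted for brevity.
EXAMPLE_JSON = """
{
  "openlabel": {
    "metadata": {"schema_version": "1.0.0"},
    "objects": {
      "uid-a": {"name": "person_0001", "type": "person"},
      "uid-b": {"name": "person_0002", "type": "person"},
      "uid-c": {"name": "catenary_pole_0001", "type": "catenary_pole"}
    },
    "frames": {
      "0": {
        "objects": {
          "uid-a": {"object_data": {"bbox": [{"name": "bbox_a", "val": [410, 220, 30, 90]}]}},
          "uid-c": {"object_data": {"poly2d": [{"name": "poly_c", "val": [1, 2, 3, 4, 5, 6]}]}}
        }
      },
      "1": {
        "objects": {
          "uid-a": {"object_data": {"bbox": [{"name": "bbox_a", "val": [415, 221, 30, 90]}]}},
          "uid-b": {"object_data": {"bbox": [{"name": "bbox_b", "val": [90, 300, 25, 70]}]}}
        }
      }
    }
  }
}
"""


def objects_per_class(doc: dict) -> Counter:
    """Count the annotated objects per object class (the 'type' field)."""
    objects = doc["openlabel"].get("objects", {})
    return Counter(obj["type"] for obj in objects.values())


def annotations_per_geometry(doc: dict) -> Counter:
    """Count the annotations per geometry kind over all frames."""
    counts = Counter()
    for frame in doc["openlabel"].get("frames", {}).values():
        for obj in frame.get("objects", {}).values():
            for geometry, items in obj.get("object_data", {}).items():
                counts[geometry] += len(items)
    return counts


doc = json.loads(EXAMPLE_JSON)

if __name__ == "__main__":
    print(objects_per_class(doc))
    print(annotations_per_geometry(doc))
```

The same two loops can be pointed at a real annotation file by replacing `json.loads(EXAMPLE_JSON)` with `json.load` on an opened file, provided the file follows the assumed layout.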

Following this specification, the company Fusion Systems GmbH has annotated about 204,000 objects from 20 different object classes in the sensor data (see Figure 3).

The multi-sensor data set OSDaR23 will be used in the future in the Data Factory of Digitale Schiene Deutschland to train AI software for environment perception. In addition, further annotated multi-sensor datasets will be created and made available via the Data Factory. A detailed description of the recordings, the contents and the annotations of the dataset is published in our technical article in Eisenbahntechnische Rundschau 04/23.

The multi-sensor data and the associated annotations contained in the OSDaR23 dataset can be downloaded from the following link:

[ref: https://doi.org/10.57806/9mv146r0]

To make the dataset easy to use, DB Netz AG has also published a suitable Python development kit:

[ref: https://github.com/DSD-DBS/raillabel]

To visualize the dataset, the WebLabel Player of the Vicomtech Research Foundation can be used: [ref: https://github.com/Vicomtech/weblabel]